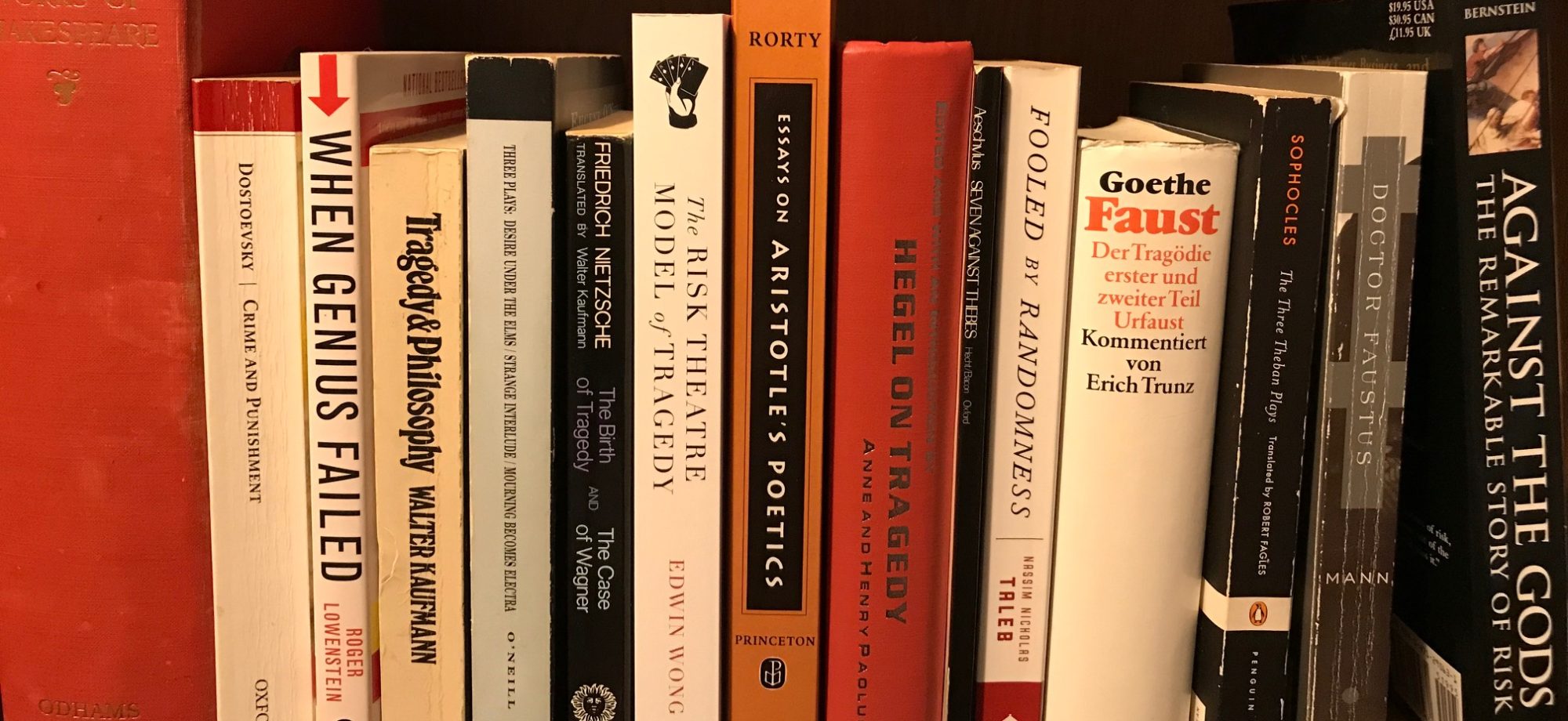

Edwin Wong here, founder of the Risk Theatre Modern Tragedy Playwriting Competition. I’m delighted to announce that Dallas playwright Franky D. Gonzalez’s boxing play: That Must be the Entrance to Heaven or, The Dawn Behind the Black Hole is the winner of the 2022 competition. The prize includes $10,200 in cash and a workshop culminating in a staged reading over Zoom. The competition is going strong, now in its fifth year (risktheatre.com). Its goal is to invite playwrights to dramatize risk, otherwise known as unexpected low-probability, high-consequence events. In 2018, I founded the competition because I’m fascinated with risk. Risk shapes our lives. Risk shapes our lives because it is not what we think will happen, but what we least expect that has the greatest impact. It’s not the calculated risks that matter, but rather the uncalculated risks. Lots of people don’t get that. They think they can do away with risk with insurance, diversification and hedging strategies, clever planning, and so on. To remind people of the true power of risk, there is a dramatic art form in theatre known as “tragedy.” In two books—The Risk Theatre Model of Tragedy (2019) and When Life Gives You Risk, Make Risk Theatre (2022, cowritten with past winners)—I overturned the traditional reading of tragedy that has a hero make a mistake and lose all. In the risk theatre reading, the hero actually has quite a good, foolproof plan. But then something unexpected happens: an unforeseen chance event like Birnam Wood coming to Dunsinane Hill or meeting a man not of woman born. Because the hero has taken on too much risk, the hero is exposed to the highly improbable. It’s the opposite of the old folk saying to “keep some powder dry.” Because the hero has “burnt up every last match,” when the shit hits the fan, well, the hero is done like dinner. Now, it is easy to write two books about how risk is the dramatic fulcrum of the action. 
But does the risk theatre theory of tragedy work on the actual stage? That question remained unanswered. And so the competition. The cool thing about the risk theatre theory is that the competition will be the proof of the pudding. If the competition, in the next thirty years, can produce a number of plays that enter the canon—that is to say, plays that will stand shoulder to shoulder with Aeschylus, Shakespeare, O’Neill, and the other tragedians of repute, then it can be said that risk theatre works. Literary theories, just like scientific theories, must go through the chicane of the scientific method.

Every year I sit in anticipation, praying that one of the risk theatre winners or finalists can take their play to the next level: audiences queueing in long lines, sold out shows, people talking in the streets. But to take it to the next level is hard. First, the play must be great. It must be unique. From the inception of the competition to this day, there have been great winners and great plays, beginning with Gabriel Jason Dean’s In Bloom, to Nicholas Dunn’s The Value and Madison Wetzell’s The Lost Ballad of Our Mechanical Ancestor. And now Gonzalez’s That Must be the Entrance to Heaven. But, for a play to make it, it has to be more than great. At this point, luck is involved. The right person has to hear about it. They have to have time to explore it. Then the right theatre has to hear about it. Someone has to take a chance on the play. Should we produce a Shakespeare or an Ibsen or a Miller that is guaranteed to sell out so many seats or take a chance on a new play? There are many obstacles. To put it in terms of probability theory—another pastime of mine—there are many paths to failure, and few paths to success. One way, however, to increase a play’s chances is to write about it. And that is what I’m going to do here. I’m going to write about Gonzalez’s That Must be the Entrance to Heaven as though it were already a classic, as though it had already entered the playwriting canon. And yes, if I were writing an essay on Shakespeare or Sophocles, it would go without saying that there are going to be spoilers. So, too, there will be spoilers in this essay.

Although by no means equal repute attends those who write about the great playwrights and the great playwrights themselves, those who write about the great playwrights also play an important role because one of the things that makes a play great is the volume of discussion and scholarship behind it. That this is a boxing play itself is a topic that can lead to discussion. When I think of theatre, boxing isn’t the first thing that comes to mind. Theatre is perceived as a high art. Boxing is perceived to be a low art. The people that partake of theatre likely do not cross over with the boxing community. The same is true vice-versa: the theatre isn’t the place where you would expect to bump into Arturo Gatti, Micky Ward, Floyd Mayweather, Thomas “The Hitman” Hearns, or the other fighters that Gonzalez references in his play. Gonzalez, by putting together boxing and theatre, is bringing together different worlds with different outlooks not only about life, but about each other.

There is a quartet of main characters in Entrance to Heaven: Edgar, Juan, Manuel, and Armando. They’ve all sacrificed big time for a shot at the title. Edgar is an undocumented fighter who’s fighting to stay in the country. He’s also fighting for the memory of his mother, who died getting him into the country. Juan is fighting to keep his family afloat, to make good on all the broken promises he made to his wife and his child. Manuel is fighting to emerge from the shadow of his brother, a former five-division and pound-for-pound champ. Armando defected from Cuba and left his family to become world champion. They all have ghosts. Family members who no longer talk to them or have passed away. Entrance to Heaven, in this light, is about the price you pay. The weight of your dreams like a gravity crushing you down. What happens in the play is beautiful and unexpected: it is when they fail and fall short of their dreams that they feel the lightness of an inner peace.

The first and most crucial question that must be asked is: how do you play the quartet of boxers? Option #1: you play them as characters that the audience feels sorry for. There would be many ways to defend this reading: the characters lack resources. The characters have had poor upbringings. They face inequality. Their education has been less than stellar: one of the Latino characters cannot speak Spanish, for which he is mercilessly attacked by a boxer who is good enough to teach him how to count in Spanish. If only someone would help them. Option #2: you play them as fiercely proud individuals for whom no quarter is asked, and none given. They box because that is who they are. No apologies. The boxers are Latino “overmen” who overcome obstacles on their way to their title shots. Option #1 is the more humane reading, but takes away their dignity. Option #2 is full of the spirit of Ares, but glosses over inequality. The character Juan seems to encourage option #1 (he is truly tired of getting punched on his granite chin). The character Manuel, with his no-holds-barred, relentless, forward-moving, trash-talking style, encourages option #2. The true way to play the play probably lies somewhere in between options one and two. But the wonderful thing about Entrance to Heaven is that it encourages the discussion. The path to the canon lies through controversy.

As it happens, I enjoy both theatre and boxing. Four years ago, I started kickboxing. Last August, I started going to the boxing gym. I used to think the MMA (mixed martial arts) and the boxing gyms were dangerous places, full of savage people. They were places where you had to watch yourself. Now I think differently. There are, indeed, savage folks in these places. You can tell just looking at their muscles what they can do. The people with the ripped muscles that are really defined where they connect to the bones are always deadly. There is something about how the muscles connect to the bones that defines how hard someone can hit. The people who are big but move quickly are perhaps the deadliest. There are brawlers. And then there are assassins, the ones who set traps for you. Fighting some of these people is like playing chess. I used to be nervous going to class. I still am. But after getting to know the people, I came to realize this is their home. This is where they belong. Some of them are amateurs. Some are pros, or at least semi-pro. They make money fighting, coaching, and promoting. It is their life. What I also realized is that the MMA and boxing world is quite diverse. Women champions can make as much as men: think Ronda Rousey. In UFC, the current heavyweight champ, Francis Ngannou, left Cameroon to pursue his dream. When he started training in France, he was homeless. Now he’s world champ. Look at the referees, fighters, and announcers: they’re from all different backgrounds, ethnicities, and religions. Fighters of all religions, whether Muslim, Jewish, Christian, or others, train side by side. Though they don’t talk about inclusivity, they are inclusive. But they are not known for that. That is too bad. Take a look at your local theatre. I am sure they talk of welcoming and friendship. But how diverse are they? If yes, then great. If not, perhaps they could take a page from the MMA and boxing community. 
I’m not saying that the fighting community is perfect, but what I am saying is that there is much for communities to learn from one another. When Entrance to Heaven enters the canon, another way I foresee it encouraging different communities to come together is that scholars studying it, scholars who are used to academic references, will have to take a deep dive into boxing to properly analyze it. They will have to watch footage from some of these fights Gonzalez talks about in his “references” section: the action in the play is modelled after specific real-life fights. Who knows, perhaps one day no one would remember Eubank or Klitschko or Corrales if Gonzalez had not brought them into his play. Don’t laugh. I remember a story of Cus D’Amato training a young Mike Tyson, telling him that one day no one would remember the great boxer and (at that time) world celebrity Jack Dempsey unless they talked of him. Tyson laughed. He couldn’t believe it. But that came true. The only reason I know about Dempsey and started reading about him is because I heard about him through Tyson always bringing him up. Fame works in strange ways.

I should talk about Entrance to Heaven and risk. The point of the competition is to encourage dramatists to dramatize risk. There is plenty of this in Entrance to Heaven: each of the characters is “all-in.” And then, because they have taken on inordinate risk, the unexpected happens. In the case of this play, the unexpected is the realization of the price our dreams exact upon us. Be careful what you wish for. Dreams are funny; they sometimes leave you with nothing. They are full of fire on the way, but after the fires have burnt out, all that remains is smoke and burned-out remnants. The thing about risk that Entrance to Heaven taught me most, however, was how much personal risk the playwright undertakes to write an honest play. Just like in Albert Camus’ The Plague where the leading quartet of characters (Rambert, Rieux, Tarrou, and Grand) are aspects of Camus himself, I can’t help but imagine that the prizefighters Edgar, Juan, Manuel, and Armando are reflections of Gonzalez. Their struggle is his struggle. Entrance to Heaven is a play full of the most convinced courageousness. It took courage to write it. It makes the play beautiful because it is human. I can’t be sure that I’m right, of course. But, from having followed Franky’s star the last four years and having chatted with him a handful of times, the likelihood is high (here’s a link to an interview we did in 2022 https://www.youtube.com/watch?v=VChvLT8FGrs&t=14s). He has that same drive and ambition his fighters have. I know that because he is one of the few playwrights to have entered the Risk Theatre Competition each year. Sometimes he was close. When he didn’t take the grand prize, he kept going. Like they say in boxing, you have to keep going because you never know how close you were.

I have read and also seen a reading of another of Gonzalez’s plays, Paletas de Coco. It was a finalist in one of the previous years. It’s about a playwright searching for an absent father. It too was a courageous play that opened my eyes to how risk doesn’t have to be internal to the action. Risk is also beautiful when you can tell the writer has taken risks or wears his heart on his sleeve. The risk in Gonzalez’s plays that is really different from what other people are doing is that there isn’t a separation between his art and his life. They are a unity. Bravo. Usually when playwrights write close to home, they lock their work in a vault, only to be produced so many years from now. Think of O’Neill’s Long Day’s Journey into Night: O’Neill said no performances or publication until twenty-five years after his death. Gonzalez, unlike O’Neill, is putting himself out there, right here, right now. For that reason, it feels like I am witnessing something truly unique and wonderful. I read a lot of plays and Gonzalez is the only living writer I know who breaks down the divide between art and life so relentlessly. I feel he encourages this self-identification in his plays as well. For example, he names one of his fighters Juan David Gonzalez. The dramatic effect of this identification is that when the fighters talk about their dreams and their sacrifices, the audience (or reader) also wonders how much Gonzalez has sacrificed to get to where he is. This double identification deepens the stage and makes risk more palpable. One gets the feeling that Gonzalez talks about risk because he has walked the walk. During our interview, I remember him telling a story of how he went out on a limb, going all-in and using his own money to self-produce a play. I thought: “Here is someone who will go far because he believes in himself and has something to say.”

Another feature of That Must be the Entrance to Heaven that stands out is its language. Stylized. Often, tragedies are written in a rhythmic language that sets them apart from everyday speech. Greek tragedy favours speech in iambic trimeters; Shakespeare likes blank verse. Gonzalez, as well, has adopted a unique playwright’s cant to express his ideas. Each line averages five words. A few are shorter, some are a few words longer. Here’s an example:

Armando. I have come to learn a deep truth.

Every human being,

Whether the mightiest emperor

Or the lowest of nobodies

Is born with a tremendous weight

Pressing down on their souls.

We call that weight our Dreams.

And this life

The whole of our existence

Is spent trying to force that weight

That chimera, that Dream, from our souls.

Each line contains an image or an idea. By setting the lines in quick succession, he builds up great crescendos in thought. For example, Armando’s first six lines build up to “We call that weight our Dreams.” Then, following that pronouncement, he builds up again to another pronouncement on the effect of our dreams. His technique fascinates me. It really allows devices like repetition to shine. For example:

Juan. I kept losing.

And losing.

And losing.

And losing.

And losing.

And losing.

And losing.

Until my promises

Turned into lies.

The repetition and brevity of each line really allow the concluding pronouncement of “Turned into lies” to hit home. There, too, is risk in the language, of repeating a statement seven times before taking it home. If, on the stage, the actor pulls it off, it will hit home. In language as in life, no risk, no reward.

Dramatically, That Must be the Entrance to Heaven brings to life a tour de force in risk and unexpected, low-probability, high-consequence events: nothing goes according to plan. I suspect that is why the jurors nominated the play as the 2022 winner. From what I can see on social media, there is great interest in this play: it is the first winning play from the competition that will have a full production (in 2023, next year). Wow. The action promises to be breathtaking. It would be fascinating to have actors with a boxing background play the parts. They could reenact in a theatre the sequences of the great fights Gonzalez draws from: Gatti vs. Ward, Klitschko vs. Joshua, and others. This is the play that can make boxing fans out of theatre fans. There is so much in boxing that is theatrical. In one of the Gatti vs. Ward fights, Micky Ward hit Gatti with one of his trademark liver shots. The shot that makes other fighters black out and crumple to the canvas. I remember that moment to this day. Gatti doesn’t go down. He looks at Ward with such eyes, eyes that say: “Bro, why did you have to hit me so hard??!?” Ward, in turn, doesn’t go in for the kill. He looks at Gatti looking at him. Time in the ring is frozen. They say boxing is a low art. But this moment reminded me of the most beautiful moment in all of literature, when time stands still in the Trojan War back in the old days. Achilles finally meets Hector. The war around them, all the chariots and spears and dust and yelling, pauses. On the battlefield it is only them. They chat like friends as they kill one another. Somewhere, far away, Andromache is pouring a hot bath for Hector. He will not require it. The whole scene is unbelievable. All the cosmos stands still for them. But, even though it is unbelievable, it is the most believable thing ever, because it is the most beautiful moment in literature. In That Must be the Entrance to Heaven, during the fights, I experienced this same relaxation of time. 
It is beautiful. It is seldom that I have seen it in drama. It will take the most talented actors to bring this effect about, which, like all the most beautiful moments in art, is the most fragile of things. If it can be pulled off, this will be a play to be remembered for all time.

For me, what opened my eyes the most was what the play taught me about risk. Not risk as I had been thinking about it. But risk in terms of how much great writers take in drawing from their own lives to produce the most honest and beautiful literatures and dramas. Read and see Gonzalez’s plays. We are witnessing a writer that comes along once a generation. From the black hole, a star is born. But, don’t take my word for it. See for yourself.

– – –

Don’t forget me. I’m Edwin Wong and I do Melpomene’s work.

sine memoria nihil

Edwin Wong has been dubbed “an Aristotle for the 21st century” (David Konstan, NYU) and “independent and provocative” (Robert C. Evans, AUM) for exploring the intersection between risk and theatre. He has published two books (The Risk Theatre Model of Tragedy [2019] and When Life Gives You Risk, Make Risk Theatre [2022]) and over a dozen essays on this topic. In 2022, he was one of three international academics to receive the Ben Jonson Discoveries Award for his work on Shakespeare’s Hamlet. In 2018, he founded the Risk Theatre Modern Tragedy Playwriting Competition, the world’s largest competition for the writing of tragedy (risktheatre.com). Wong has talked at venues from the Kennedy Center and the University of Coimbra to conferences hosted by the National New Play Network, Canadian Association of Theatre Research, Society of Classical Studies, and Classical Association of the Middle West and South. He was educated at Brown University and is on Twitter @TheoryOfTragedy.